Increasingly, businesses rely on algorithms that use data provided by users to make decisions that affect people. For example, Amazon, Google, and Facebook use algorithms to tailor what users see, and Uber and Lyft use them to match passengers with drivers and set prices. Do users, customers, employees, and others have a right to know how companies that use algorithms make their decisions? In a new analysis, researchers explore the moral and ethical foundations of such a right. They conclude that the right to such an explanation is a moral right, then address how companies might provide it.

The analysis, by researchers at Carnegie Mellon University, appears in Business Ethics Quarterly, a publication of the Society for Business Ethics.

“In most cases, companies do not offer any explanation about how they gain access to users’ profiles, from where they collect the data, and with whom they trade their data,” explains Tae Wan Kim, Associate Professor of Business Ethics at Carnegie Mellon University’s Tepper School of Business, who co-wrote the analysis. “It’s not just fairness that’s at stake; it’s also trust.”

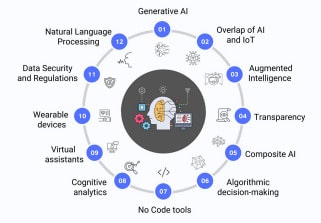

In response to the rise of autonomous decision-making algorithms and their reliance on data provided by users, a growing number of computer scientists and governmental bodies have called for transparency under the broad concept of algorithmic accountability. For example, the European Parliament and the Council of the European Union adopted the General Data Protection Regulation (GDPR) in 2016, part of which regulates the use of automated algorithmic decision systems. The GDPR, which took effect in 2018, affects businesses that process the personally identifiable information of residents of the European Union.

But the GDPR is ambiguous about whether it includes a right to explanation regarding how businesses’ automated algorithmic profiling systems reach decisions. In this analysis, the authors develop a moral argument that can serve as a foundation for a legally recognized version of this right.

In the digital era, the authors write, some say that informed consent—obtaining prior permission for disclosing information with full knowledge of the possible consequences—is no longer possible because many digital transactions are ongoing. Instead, the authors conceptualize informed consent as an assurance of trust for incomplete algorithmic processes.

Obtaining informed consent, especially when companies collect and process personal data, is ethically required unless overridden for specific, acceptable reasons, the authors argue. Moreover, informed consent in the context of algorithmic decision-making, especially for non-contextual and unpredictable uses, is incomplete without an assurance of trust.

In this context, the authors conclude, companies have a moral duty to provide an explanation not just before automated decision making occurs, but also afterward, so the explanation can address both system functionality and the rationale of a specific decision.

The authors also delve into how companies that run businesses based on algorithms can provide explanations of their use in a way that attracts clients while maintaining trade secrets. This is an important decision for many modern start-ups, which must settle questions such as how much code should be open source and how extensive and exposed the application programming interface should be.

Many companies are already tackling these challenges, the authors note. Some may choose to hire “data interpreters,” employees who bridge the work of data scientists and the people affected by the companies’ decisions.

“Will requiring an algorithm to be interpretable or explainable hinder businesses’ performance or lead to better results?” asks Bryan R. Routledge, Associate Professor of Finance at Carnegie Mellon’s Tepper School of Business, who co-wrote the analysis. “That is something we’ll see play out in the near future, much like the transparency conflict of Apple and Facebook. But more importantly, the right to explanation is an ethical obligation apart from bottom-line impact.”

Article: Businesses have a moral duty to explain how algorithms make decisions that affect people