A survey published by KPMG today suggests that a large number of organizations have increased their investments in AI during the pandemic to the point that executives are now concerned about moving too fast. In fact, most of the survey respondents cited a definite need for increased AI regulation.

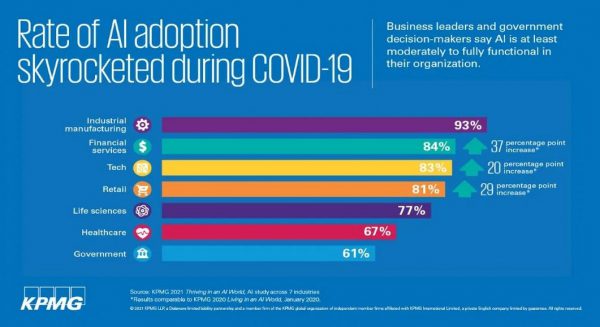

The survey covered 950 business decision-makers and/or IT decision-makers with at least a moderate amount of AI knowledge at companies with more than $1 billion in revenue. It finds AI technologies are most likely to be moderately to fully employed in industrial manufacturing (93%), financial services (84%), technology (83%), retail (81%), life sciences (77%), health care (67%), and government (61%) sectors.

Respondents in every sector cited the pandemic as a factor that drove increased adoption of AI in the last year, though the share varied by sector: industrial manufacturing (72%), technology (57%), retail (53%), government (44%), financial services (42%), and health care and life sciences (37%).

Many respondents also noted that AI technology is moving too fast for their comfort in industrial manufacturing (55%), technology (49%), retail (49%), life sciences (47%), financial services (37%), government (37%), and health care (35%) sectors.

An overwhelming percentage of respondents also agreed that governments should be involved in regulating AI technology in the industrial manufacturing (94%), retail (87%), financial services (86%), life sciences (86%), technology (86%), health care (84%), and government (82%) sectors.

The fact that business leaders generally averse to oversight are calling for more regulation indicates they are looking for greater clarity, KPMG AI principal Traci Gusher said. They are aware of the theoretical potential of AI but don’t necessarily want a regulatory body to hold them accountable at a later date for the business outcomes it enables, Gusher noted. Dire AI warnings issued by figures such as Elon Musk are, for better or worse, also increasing concern among business and IT leaders, Gusher said.

On the plus side, survey respondents said they expect the Biden administration will do more to help advance adoption of AI in the enterprise across the industrial manufacturing (90%), technology (88%), retail (85%), financial services (82%), life sciences (81%), government (79%), and health care (73%) sectors.

In general, organizations are getting more adept at successfully deploying AI in production environments, Gusher said. However, there is still plenty of room for improvement. Data science teams are starting to adopt machine learning operations (MLOps) best practices to train AI models faster and deploy their associated inference engines more consistently. The challenge is that as business conditions change, new data sources become available, and AI models must be continuously retrained to account for them. Updates to those models then need to be folded into application development and deployment processes based on widely employed DevOps principles. Most organizations have yet to achieve the level of AI maturity required to deploy and retrain AI models at that scale.

Thus, it’s only a matter of time before an AI model deployed today starts to generate suboptimal results. In fact, most organizations don’t have the governance mechanisms in place that would allow them to detect either potential bias in a model or any drift in the results being generated.

Most organizations are also still wrestling with AI explainability. A set of machine learning algorithms will generate slightly different results on different days as they become more familiar with a set of data. But it’s hard to distinguish changes that come from algorithms simply learning from changes that might be signs of drift caused by outlier data.
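To make the idea of detecting drift more concrete, here is a minimal sketch of one common check, the Population Stability Index (PSI), which compares the distribution of a model's recent scores against a baseline sample. The survey and Gusher do not describe any specific technique; the function name, the synthetic data, and the 0.1 and 0.25 cutoffs (a widely quoted rule of thumb, not a standard) are all assumptions made for illustration.

```python
import math
import random


def population_stability_index(baseline, recent, bins=10):
    """Hypothetical helper: PSI between two samples of a numeric model score.

    Buckets are equal-width over the baseline's range; recent values that
    fall outside that range are clamped into the first or last bucket.
    """
    lo, hi = min(baseline), max(baseline)
    width = ((hi - lo) / bins) or 1.0  # guard against a constant baseline

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base = bucket_shares(baseline)
    new = bucket_shares(recent)
    return sum((n - b) * math.log(n / b) for b, n in zip(base, new))


# Synthetic scores standing in for real model output (assumed data).
rng = random.Random(0)
baseline = [rng.gauss(0.0, 1.0) for _ in range(5000)]
same = [rng.gauss(0.0, 1.0) for _ in range(5000)]     # no drift
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]  # mean has moved

psi_same = population_stability_index(baseline, same)
psi_shifted = population_stability_index(baseline, shifted)

# Rule-of-thumb reading (an assumption): < 0.1 stable, > 0.25 investigate.
print(f"no drift: {psi_same:.3f}, shifted: {psi_shifted:.3f}")
```

A check like this only flags that the score distribution has moved. It does not say whether the cause is legitimate learning, outlier data, or bias, which is the harder governance question the survey points to.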

Regardless of these issues, investments in AI will continue unabated as a hedge against the next unanticipated Black Swan event that potentially disrupts business processes at a massive scale. “More Black Swan events are going to happen,” Gusher warned.

Most executives are always conscious of business risk, so AI is not being treated much differently than any other risk, Gusher noted. However, given all that might potentially go wrong, there’s no doubt the AI stakes are a lot higher.

Article: KPMG: AI adoption is accelerating in the pandemic