Businesses are poring over the details of the Artificial Intelligence Act (“AIA” or “Regulation”), the legislative proposal for regulating applications of artificial intelligence (AI) that the European Commission put forward in April 2021. Comments on the proposed legal framework continue to pour in. Professional groups, trade unions, and the business community in Europe and across the Atlantic have been very vocal about their concerns and the likely impact of the proposed law on innovation in the EU. On December 8, 2021, four of the most influential robotics associations and innovators – the International Federation of Robotics, the VDMA Robotics + Automation Association, EUnited Robotics and REInvest Robotics – called on European policymakers to revisit and amend the proposed regulation. In October 2021, MedTech Europe, the European trade association representing the medical technology industry, also called for a review of the proposed law. Back in August 2021, the United States Chamber of Commerce submitted its comments on the proposed regulation. Major European and U.S. companies, individually and through trade associations such as Digital Europe, have voiced their concerns about the draft law. Given that the EU’s proposed regulation will be fiercely debated in the coming months and that some version of it is likely to become law, it is important that businesses and other stakeholders understand the most contentious issues in the AIA. So, what do businesses need to know about this proposed regulation, and what are the major bones of contention?

- The Choice of Regulatory Instrument

Among the range of legal instruments available to it (e.g., a voluntary labelling scheme, a sectoral ad-hoc approach, or a blanket approach), the Commission chose a ‘hard law’ approach that will impose binding legal obligations on providers and users of AI systems. Many in the business community prefer no regulation or soft regulation; the Commission disagrees. The Commission’s choice of legislative instrument is justified by, among other things, the growing and wide-ranging concerns related to AI and other emerging technologies. These concerns range from algorithmic bias, privacy violations, job losses due to automation, and widening gaps between and within states to ubiquitous surveillance and outright oppression. International law places on states the obligation to protect the rights and interests of individuals within their territory and/or jurisdiction. For example, the United Nations Guiding Principles on Business and Human Rights affirm the foundational principle that States “must protect against human rights abuse within their territory and/or jurisdiction by third parties, including business enterprises” (emphasis added), and “should set out clearly the expectation that all business enterprises domiciled in their territory and/or jurisdiction respect human rights throughout their operations” (emphasis added).

- Scope and Extraterritoriality

Like the General Data Protection Regulation (GDPR) that preceded it, the regulation has major implications for providers and users of AI systems outside of the EU. Among others, the regulation applies to: (a) providers that place on the market or put into service AI systems in the European Union, irrespective of whether those providers are established within the Union or in a third country; and (b) providers and users of AI systems located in a third country, where the output produced by the system is used in the Union. This wide territorial reach is a major concern to businesses outside the EU. Andre Franca, the director of applied data science at CausaLens, a British A.I. startup, says, “[t]he question that every firm in Silicon Valley will be asking today is, Should we remove Europe from our maps or not?” With today’s open economic borders and unprecedented data flows across borders, extraterritoriality in AI regulation is arguably inevitable. Rather than focus narrowly on the issue of extraterritoriality, discussions should center on issues such as regulatory cooperation, the corporate responsibility and accountability of all EU and non-EU AI providers and users, and how to promote regulatory interdependence around AI.

- Broad Definition of AI

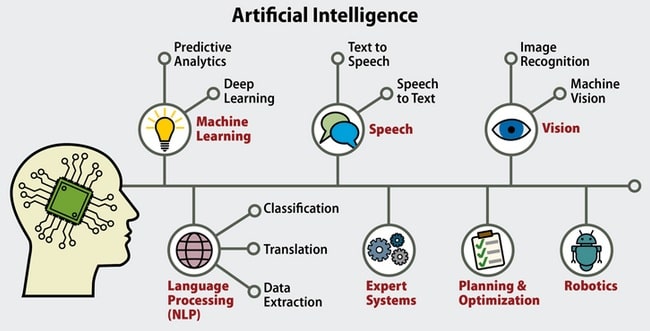

The regulation’s definition of “AI system” is problematic for businesses and other stakeholders. According to Article 3(1) of the draft law: “artificial intelligence system or AI system” means software that is developed with one or more of the approaches and techniques listed in Annex I and can, for a given set of human-defined objectives, generate outputs such as content, predictions, recommendations, or decisions influencing real or virtual environments. AI systems are designed to operate with varying levels of autonomy. An AI system can be used as a component of a product, also when not embedded therein, or on a stand-alone basis and its outputs may serve to partially or fully automate certain activities, including the provision of a service, the management of a process, the making of a decision or the taking of an action.

The techniques and approaches listed in Annex I include machine learning approaches, logic- and knowledge-based approaches, and statistical approaches, such as Bayesian estimation, search and optimization methods. In October 2021, MedTech Europe criticized the overly broad definition of AI systems and called for urgent clarification on key terms.

- Prohibited AI Systems

Reflecting its differentiated risk-based approach, the proposed law distinguishes between uses of AI that create: (i) an unacceptable risk; (ii) a high risk; (iii) a low or minimal risk; and (iv) no risk. AI systems that are deemed to pose an unacceptable risk are banned outright. For example, the Regulation bans “the placing on the market, putting into service or use of an AI system that deploys subliminal techniques beyond a person’s consciousness in order to materially distort a person’s behaviour in a manner that causes or is likely to cause that person or another person physical or psychological harm.” Businesses are understandably worried about the ambiguity of key terms. What does the regulation mean by “subliminal techniques beyond a person’s consciousness,” and when do such uses “materially distort” a person’s behaviour? It is important that ambiguous terms are clarified to ensure a measure of clarity and predictability in the marketplace.

- High-Risk AI Systems – Obligation on Businesses

The regulation provides, in Annex III, an exhaustive list of AI systems considered high-risk. Subject to specified exceptions, AI systems considered high-risk are those that fall in the following areas:

- Remote biometric identification and categorization of natural persons

- Management and operation of critical infrastructure (e.g., the supply of water, gas, heating and electricity)

- Educational and vocational training

- Employment, workers management, and access to self-employment

- Law enforcement

- Access to and enjoyment of essential private services and public services and benefits

- Migration, asylum, and border-control management

- Administration of justice.

The Regulation’s risk classification scheme raises interpretation issues and challenges for businesses in most sectors. Regarding the health sector, MedTech Europe worries that the proposal’s broad definition of AI systems and risk classification “will result in any medical device software (placed on the market or put into service as a stand-alone product or component of hardware medical device) falling in the scope of the AI Act and being considered a high-risk AI system.”

- Penalties

The regulation specifies penalties for infringement and stipulates that the penalties provided for “shall be effective, proportionate, and dissuasive.” Non-compliant companies face fines that are based on their worldwide turnover. The regulation imposes penalties of up to 30 million euros or 6% of global revenue, whichever is higher, for the most serious violations. The supply of incorrect, incomplete, or misleading information to competent authorities in reply to a request is subject to administrative fines of up to 10 million euros or, if the offender is a company, up to 2% of its total worldwide annual turnover for the preceding financial year, whichever is higher. The U.S. Chamber of Commerce takes the position that penalties based on worldwide turnover are “extraordinary” and believes that any penalties related to a violation of the regulation should be based instead on EU revenues. The good news is that some flexibility is built into the penalty regime. For example, Article 71(6) stipulates that when deciding on the amount of the administrative fine, all relevant circumstances of the specific situation are to be taken into account and due regard shall be given to a number of factors including, inter alia: (a) the nature, gravity and duration of the infringement and of its consequences; and (b) whether administrative fines have been already applied by other market surveillance authorities to the same operator for the same infringement.
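To make the “whichever is higher” rule concrete, the ceiling on a fine for a company can be sketched as a simple calculation. This is only an illustration of the two tiers described above: the tier labels and the function name are invented for the example, amounts are in whole euros, and the result is the maximum a fine could reach, not the fine an authority would actually set after weighing the Article 71(6) factors.

```python
# Illustrative sketch only. Tier labels and function name are hypothetical;
# the figures are the two tiers described in the text above.
FINE_TIERS = {
    # tier label: (fixed ceiling in euros, percent of worldwide annual turnover)
    "most_serious_violation": (30_000_000, 6),
    "incorrect_information": (10_000_000, 2),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int) -> int:
    """Return the upper limit of the fine for a company: the higher of the
    fixed ceiling and the turnover-based ceiling."""
    fixed_ceiling, percent = FINE_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding on large amounts.
    turnover_ceiling = worldwide_turnover_eur * percent // 100
    return max(fixed_ceiling, turnover_ceiling)


# A company with EUR 2 billion in worldwide turnover: 6% exceeds EUR 30 million.
print(max_fine_eur("most_serious_violation", 2_000_000_000))
# A company with EUR 100 million in turnover: the fixed EUR 30 million ceiling applies.
print(max_fine_eur("most_serious_violation", 100_000_000))
```

The example shows why the worldwide-turnover basis matters to large firms: once turnover passes 500 million euros, the percentage-based ceiling overtakes the fixed 30 million euro figure.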

- A New Institutional Framework

The draft law would put in place a regulatory regime that includes impact assessments, ongoing reporting obligations, and rigorous oversight and review mechanisms. At the EU level, the Regulation envisages the establishment of a new ‘European Artificial Intelligence Board’ (Article 56(1)). The primary task of the new Board is to provide advice and assistance to the Commission in order to: (a) contribute to the effective cooperation of the national supervisory authorities and the Commission with regard to matters covered by the Regulation; (b) coordinate and contribute to guidance and analysis by the Commission and the national supervisory authorities and other competent authorities on emerging issues across the internal market with regard to matters covered by the Regulation; and (c) assist the national supervisory authorities and the Commission in ensuring the consistent application of the Regulation.

- Micro, Small and Medium-Sized Enterprises and Startups

There are concerns that the regulation is not attentive to the needs and challenges of small-scale providers and startups. Critics believe that the obligations associated with so-called ‘High-Risk AI’, which include extensive mandatory third-party certification requirements, are very burdensome for small enterprises. The United Nations General Assembly (UNGA) recognizes that Micro-, Small and Medium-sized Enterprises (MSMEs) are crucial in achieving the Sustainable Development Goals and has encouraged states to adopt MSME-supporting policies. Indeed, the UNGA’s resolution 71/279, adopted in April 2017, designated June 27 as “Micro-, Small and Medium-sized Enterprises Day”. To be clear, the regulation does pay some attention to SMEs and startups; whether it goes far enough is the question. For example, Article 55 is titled ‘Measures for small-scale providers and users’ and obliges EU Member States to, inter alia, (i) provide small-scale providers and start-ups with priority access to the AI regulatory sandboxes, and (ii) take the specific interests and needs of small-scale providers into account when setting the fees for conformity assessment. Furthermore, Article 71(1) stipulates that penalties “shall take into particular account the interests of small-scale providers and start-up and their economic viability.”

- Innovation and AI Regulatory Sandboxes

Many businesses believe that the regulation will stifle innovation in Europe. Senior policy analyst Benjamin Mueller argues that the regulation is “a damaging blow to the Commission’s goal of turning the EU into a global A.I. leader” and would create “a thicket of new rules that will hamstring companies hoping to build and use A.I. in Europe.”

In crafting the regulation, the Commission was not oblivious to the need to enhance the competitiveness of EU firms and incentivize innovation. Whether the regulation strikes the right balance will likely remain a matter of considerable debate. For example, Title V of the regulation (Measures in Support of Innovation) gives a nod to the concept of a regulatory sandbox, which the U.K. Financial Conduct Authority defines as “a ‘safe space’ in which businesses can test innovative products, services, business models, and delivery mechanisms without immediately incurring all the normal regulatory consequences of engaging in the activity in question.”

- Overregulation, Vagueness, and Overlaps

Critics are concerned about overlaps with existing regulatory frameworks, conflicting obligations, and overregulation. In October 2021, MedTech Europe called for urgent clarification of the regulation in part because of concerns over potential overlaps with existing regulatory frameworks. In an August 6, 2021 press release, MedTech Europe called for particular attention to be paid to misalignment between provisions of the AIA and other legal frameworks, including the Medical Device Regulation and the GDPR. While welcoming the EU’s risk-based approach to AI regulation, the Computer & Communications Industry Association, a trade body that represents a broad section of technology firms, hoped that the proposal would be “further clarified and targeted to avoid unnecessary red tape for developers and users.”

Conclusions

The Regulation has drawn criticism as well as praise. Among other things, critics point to the Regulation’s vagueness, complex regulatory and technical requirements, and broad territorial scope. Supporters praise the Regulation for banning some AI systems outright and for the mandatory rules imposed on providers and users of AI, including obligations for risk assessment, keeping a human in the loop, ensuring data quality, and designing risk-management plans. Peter van der Putten, director of AI solutions at cloud software firm Pegasystems, says, “[t]his is a significant step forward for both EU consumers and companies that want to reap the benefits of AI but in a truly responsible manner.”

Disputes over how to regulate artificial intelligence should be expected and are likely to grow. There remain wide disagreements regarding the considerations – ethical, competitive, security, sustainable development, or others – that should drive AI regulation. There is also considerable debate around which values are negotiable and which are not. Are there values that are absolutely non-negotiable? Are there activities that should never be carried out using AI systems?

Between a blanket regulation of all AI systems and a differentiated risk-based approach to AI regulation, a nuanced risk-based approach is arguably more appropriate. A nuanced approach best addresses the tension between the goals of promoting innovation and economic growth on the one hand and protecting important public interests such as human rights and sustainable development on the other. The challenge, however, is ensuring clarity around the definition of key terms and the risk classification, and striking the right balance between protecting fundamental rights and values and promoting innovation and competitiveness; on these issues, the jury is still out. Ultimately, debates about whether the Regulation offers a robust regulatory framework that provides legal coherence, certainty, and clarity to all actors are likely to continue, and businesses, big and small, are encouraged to lend their voice to the debate.

Article: Businesses and the EU’s proposed ‘Artificial Intelligence Act’: Major points of controversy