In 2019, Rafael Yuste successfully implanted images directly into the brains of mice and controlled their behavior. Now, the neuroscientist warns that there is little that can prevent humans from being next.

If used responsibly, neurotechnology — in which machines interact directly with human neurons — can be used to understand and cure stubborn illnesses like Alzheimer’s and Parkinson’s disease, and assist with the development of prosthetic limbs and speech therapy.

But if left unregulated, neurotechnology could also lead to the worst corporate and state excesses, including discriminatory policing and privacy violations, leaving our minds as vulnerable to surveillance as our communications.

Now a group of neuroscientists, philosophers, lawyers, human rights activists and policymakers is racing to protect that last frontier of privacy: the brain.

They are not seeking a ban. Instead, campaigners like Yuste, who runs the Neurorights initiative at Columbia University, call for a set of principles that guarantee citizens’ rights to their thoughts, and protection from any intruders, while taking advantage of any potential health benefits.

But they see plenty of reason to be alarmed about certain applications of neurotechnology, especially as it attracts the attention of militaries, governments and technology companies.

China and the U.S. are both leading research into artificial intelligence and neuroscience. The U.S. Defense Department is developing technology that could be used to tweak memories.

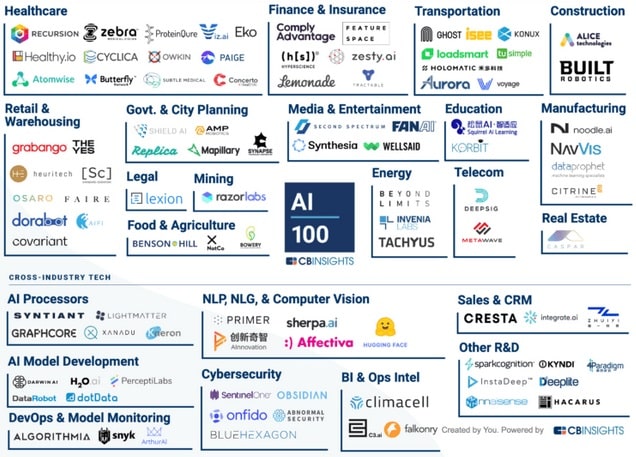

It’s not just scientists; firms, including major players like Facebook and Elon Musk’s Neuralink, are making advances too.

Neurotech wearables are now entering the market. Kernel, an American company, has developed a headset for the consumer market that can record brain activity in real time. Facebook funded a project to create a brain-computer interface that would allow users to communicate without speaking. (They pulled out this summer.) Neuralink is working on brain implants, and in April 2021 released a video of a monkey playing a game with its mind using the company’s implanted chip.

“The problem is what these tools can be used for,” Yuste said. There are some scary examples: Researchers have used brain scans to predict the likelihood of criminals reoffending, and Chinese employers have monitored employees’ brainwaves to read their emotions. Scientists have also managed to subliminally probe for personal information using consumer devices.

“We have on the table the possibility of a hybrid human that will change who we are as a species, and that’s something that’s very serious. This is existential,” he continued. Whether this is a change for good or bad, Yuste argues now is the time to decide.

Inception

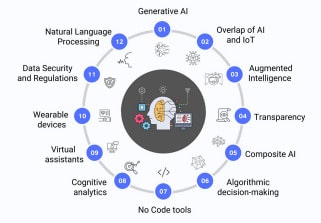

The neurotechnology of today cannot decode thoughts or emotions. But with artificial intelligence, that might not be necessary. Powerful machine learning systems could make correlations between brain activity and external circumstances.

“In order to raise privacy challenges it’s sufficient that you have an AI that is powerful enough to identify patterns and establish correlative associations between certain patterns of data, and certain mental states,” said Marcello Ienca, a bioethicist at ETH Zurich.

Researchers have already managed to use a machine learning system to infer credit card digits from a person’s brain activity.

Brain scans have also been used in the criminal justice system for diagnostics and for predicting which criminals are likely to offend again. At this stage both uses, like lie detector tests before them, offer limited and at times flawed information.

That could have dire consequences for people of color, who are already likely to suffer disproportionately from algorithmic discrimination.

“[W]hen, for example, lie detection or the detection of memory appears accurate enough according to the science, why would the public prosecutor say no to such kind of technology?” said Sjors Ligthart, who studies the legal implications of coercive brain reading at Tilburg University.

With brain implants in particular, experts say it’s unclear whether thoughts would be induced, or originate from the brain, which poses questions over accountability. “You cannot discern which tasks are being conducted by yourself and which thoughts are being accomplished by the AI, simply because the AI is becoming the mediator of your own mind,” Ienca said.

Where is my mind?

People have never needed to assert the authority of the individual over the thoughts they carry, but neurotechnology is prompting policymakers to do just that.

Chile is working on the world’s first law that would guarantee its citizens such so-called neurorights.

Senator Guido Girardi, who is behind Chile’s proposal, said the bill will create a registration system for neurotechnologies similar to the one for medicines, and that using these technologies will require the consent of both the patient and doctors.

The goal is to ensure that technologies such as “AI can be used for good, but never to control a human being,” Girardi said.

In July, Spain adopted a nonbinding Charter for Digital Rights, meant to guide future legislative projects.

“The Spanish approach is to ensure the confidentiality and the security of the data that are related to these brain processes, and to ensure the complete control of the person over their data,” said Paloma Llaneza González, a data protection lawyer who worked on the charter.

“We want to guarantee the dignity of the person, equality and nondiscrimination, because you can discriminate people based on his or her thoughts,” she said.

The Organisation for Economic Co-operation and Development, a Paris-based club of mostly rich countries, has approved nonbinding guidelines on neurotechnology and listed new rights meant to protect privacy and the freedom to think one’s own thoughts, known as cognitive liberty.

The problem is that it’s unclear whether existing legislation, which did not have neurotech in mind when drafted, is adequate.

“What we do need is to take another look at existing rights and specify them to neurotechnology,” said Ligthart. One target might be the European Convention on Human Rights, whose guarantee of the right to respect for private life could be updated to include a right to mental privacy.

The GDPR, Europe’s strict data protection regime, offers protection for sensitive data, such as health status and religious beliefs. But a study by Ienca and Gianclaudio Malgieri at the EDHEC Business School in Lille found that the law might not cover emotions and thoughts.

Yuste argues that action is needed on an international level, and organizations such as the U.N. need to act before the technology is developed further.

“We want to do something a little bit more intelligent than wait until we have a problem and then try to fix it when it’s too late, which is what happened with the internet and privacy and AI,” Yuste said.

Today’s privacy issues are going to be “peanuts compared to what’s coming,” he said.

Article: Machines can read your brain. There’s little that can stop them