A group of researchers from the University of Cambridge has trained a robotic “chef” to watch cooking videos and replicate the dishes. Cooking remains a hard problem for robots, even though robot chefs have been depicted in science fiction for years. Some companies have developed prototype robot chefs, but none are currently available on the market, and they still fall behind human cooks in terms of skill. Learning from video could make it easier and more affordable to deploy robot chefs in the future.

The researchers aimed to teach a robot chef to learn like humans do, through observation and incremental learning. They created a set of eight simple salad recipes and filmed themselves preparing them. Using a publicly available neural network, they trained the robot chef to recognize the different objects and actions involved in cooking.

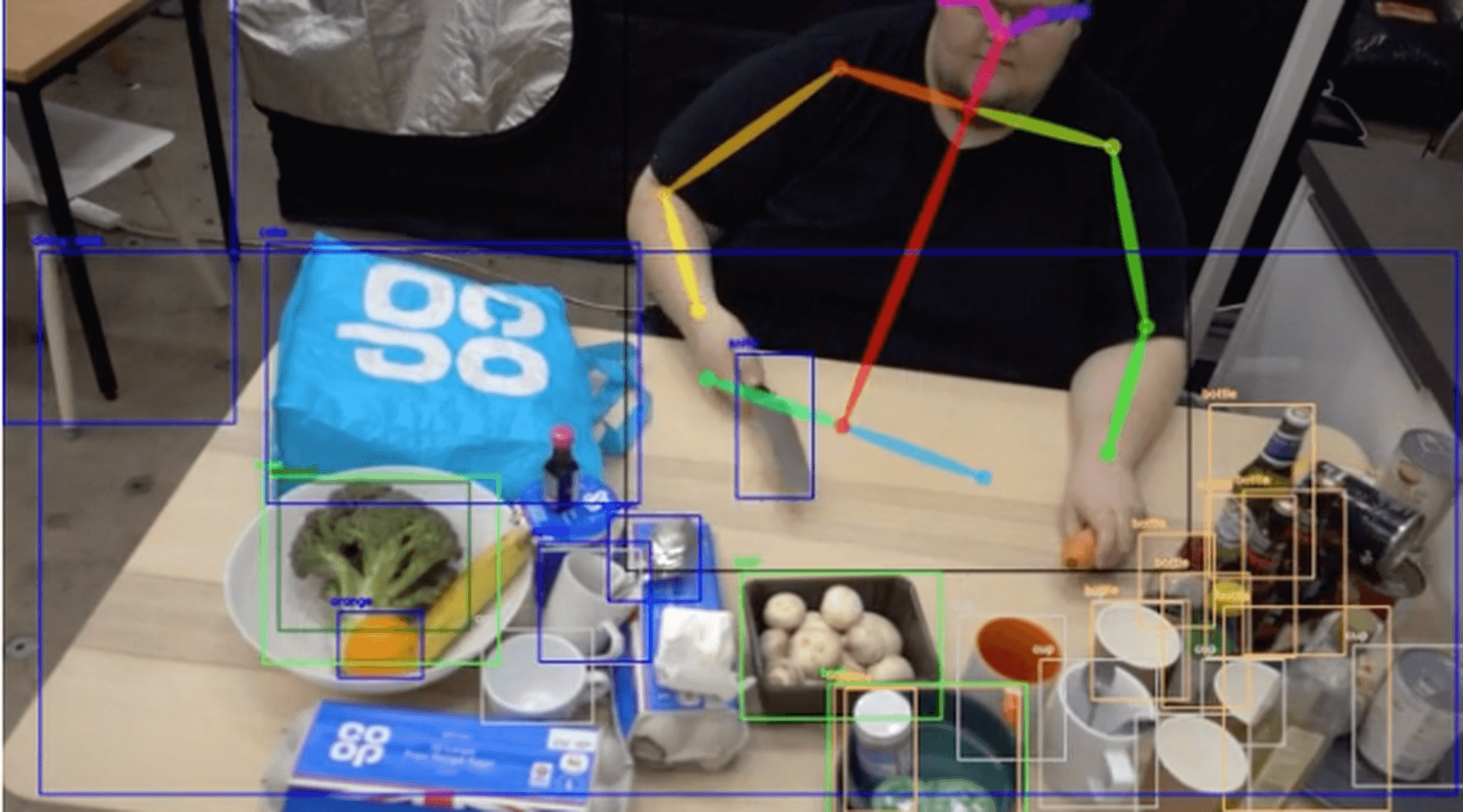

The robot analyzed each frame of the cooking videos using computer vision techniques. It identified objects such as fruits, vegetables, knives, and the human demonstrator’s body parts. By observing the ingredients and actions, the robot could determine which recipe was being prepared. It even detected slight variations in recipes and correctly identified them as variations rather than new recipes. The robot’s ability to understand the nuances of cooking was impressive.

Across the 16 videos it watched, the robot recognized the correct recipe 93 percent of the time, even though it detected only 83 percent of the human chef’s actions. The robot also recognized that a ninth salad was a new recipe, learned it, and added it to its repertoire.

“It’s amazing how much nuance the robot was able to detect,” says Grzegorz Sochacki from Cambridge’s Department of Engineering, in a university release. “These recipes aren’t complex – they’re essentially chopped fruits and vegetables, but it was really effective at recognizing, for example, that two chopped apples and two chopped carrots is the same recipe as three chopped apples and three chopped carrots.”
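The behaviour Sochacki describes, treating scaled quantities as the same recipe and an unfamiliar combination as a new one, can be illustrated with a small sketch. This is not the researchers' published method: the cosine-similarity comparison, the 0.9 threshold, the example cookbook, and the names `cosine_similarity`, `match_recipe`, and `observe` are all assumptions chosen for illustration.

```python
from math import sqrt


def cosine_similarity(a, b):
    """Cosine similarity between two ingredient-count dictionaries."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def match_recipe(observed, cookbook, threshold=0.9):
    """Return the best-matching known recipe name, or None if nothing is close."""
    best_name, best_score = None, 0.0
    for name, counts in cookbook.items():
        score = cosine_similarity(observed, counts)
        if score > best_score:
            best_name, best_score = name, score
    # The threshold is an assumed value, not one taken from the study.
    return best_name if best_score >= threshold else None


def observe(observed, cookbook, new_name, threshold=0.9):
    """Match an observation to a known recipe, or store it as a new one."""
    match = match_recipe(observed, cookbook, threshold)
    if match is None:
        cookbook[new_name] = dict(observed)
        return new_name
    return match


# Hypothetical cookbook: ingredient counts per recipe.
cookbook = {
    "apple_carrot": {"apple": 2, "carrot": 2},
    "banana_orange": {"banana": 1, "orange": 2},
}

# Three apples and three carrots have the same proportions as two and two.
scaled = observe({"apple": 3, "carrot": 3}, cookbook, "salad_3")
# No overlap with any known recipe, so it is stored as a new one.
unfamiliar = observe({"cucumber": 2, "tomato": 1}, cookbook, "salad_3")
print(scaled, unfamiliar, len(cookbook))  # apple_carrot salad_3 3
```

Cosine similarity ignores overall scale, which is why doubling or tripling every quantity still matches the original recipe. The threshold controls how far the proportions can drift before an observation is treated as a new recipe.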

The videos used to train the robot chef were unlike the fast-paced, visually polished food videos found on social media platforms, whose quick cuts and visual effects would confuse the robot. The robot also needed an unobstructed view of each ingredient: it struggled to identify a carrot held in the demonstrator’s hand, so the carrot had to be held up so that the robot could see the whole vegetable clearly.

“Our robot isn’t interested in the sorts of food videos that go viral on social media – they’re simply too hard to follow,” says Sochacki. “But as these robot chefs get better and faster at identifying ingredients in food videos, they might be able to use sites like YouTube to learn a whole range of recipes.”

The researchers envision a future in which robot chefs, once they are proficient enough at identifying ingredients in food videos, learn a wide range of recipes from platforms like YouTube and other online resources.

The study is published in the journal IEEE Access.

Article: Robot ‘chef’ can recreate tasty dishes by watching cooking videos
