The term artificial intelligence (AI) refers to computing systems that perform tasks normally considered within the realm of human decision making. These software-driven systems and intelligent agents incorporate advanced data analytics and Big Data applications. AI systems leverage this knowledge repository to make decisions and take actions that approximate cognitive functions, including learning and problem solving.

AI, which was introduced as an area of science in the mid-1950s, has evolved rapidly in recent years. It has become a valuable and essential tool for orchestrating digital technologies and managing business operations. Particularly useful are AI advances such as machine learning and deep learning.

It’s important to recognize that AI is a constantly moving target. Things that were once considered within the domain of artificial intelligence – optical character recognition and computer chess, for example – are now considered routine computing. Today, robotics, image recognition, natural language processing, real-time analytics tools and various connected systems within the Internet of Things (IoT) all tap AI in order to deliver more advanced features and capabilities.

Helping develop AI are the many cloud companies that offer cloud-based AI services. Statista projects that AI will grow at an annual rate exceeding 127% through 2025.

By then, the market for AI systems will top $4.8 billion. Consulting firm Accenture reports that AI could double annual economic growth rates by 2035 by “changing the nature of work and spawning a new relationship between man and machine.” Not surprisingly, observers have both heralded and derided the technology as it filters into business and everyday life.

History of Artificial Intelligence: Duplicating the Human Mind

The dream of developing machines that can mimic human cognition dates back centuries. In the 1890s, science fiction writers such as H.G. Wells began exploring the concept of robots and other machines thinking and acting like humans.

It wasn’t until the early 1940s, however, that the idea of artificial intelligence began to take shape in a real way. After Alan Turing introduced the theory of computation – essentially how algorithms could be used by machines to produce machine “thinking” – other researchers began exploring ways to create AI frameworks.

In 1956, researchers gathering at Dartmouth College launched the practical application of AI. This included teaching computers to play checkers at a level that could beat most humans. In the decades that followed, enthusiasm about AI waxed and waned.

In 1997, Deep Blue, a chess-playing computer developed by IBM, beat the reigning world chess champion, Garry Kasparov. In 2011, IBM introduced Watson, which used far more sophisticated techniques, including deep learning and machine learning, to defeat two top Jeopardy! champions.

Although AI continued to advance over the next few years, observers often cite 2015 as the landmark year for AI. Google Cloud, Amazon Web Services, Microsoft Azure and others began to step up research and improve natural language processing capabilities, computer vision and analytics tools.

Today, AI is embedded in a growing number of applications and tools. These range from enterprise analytics programs and digital assistants like Siri and Alexa to autonomous vehicles and facial recognition.

Different Forms of Artificial Intelligence

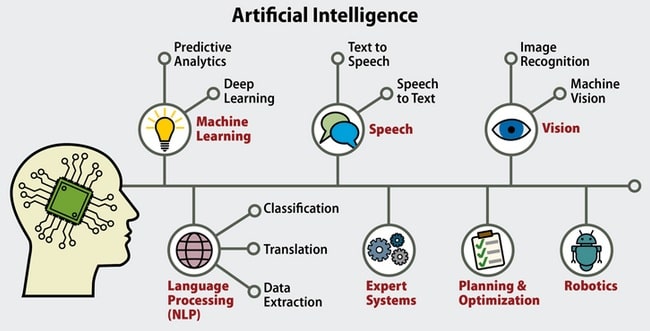

Artificial intelligence is an umbrella term that refers to any and all machine intelligence. However, there are several distinct areas of AI research and use, though they sometimes overlap. These include:

- General AI. These systems typically learn from the world around them and apply data in a cross-domain way. For example, DeepMind, now owned by Google, used a neural network to learn how to play video games similar to how humans play them.

- Natural Language Processing (NLP). This technology allows machines to read, understand, and interpret human language. NLP uses statistical methods and semantic programming to understand grammar and syntax, and, in some cases, the emotions of the writer or of those interacting with a system such as a chatbot.

- Machine perception. Over the last few years, enormous advances in sensors — cameras, microphones, accelerometers, GPS, radar and more — have powered machine perception, which encompasses speech recognition and computer vision used for facial and object recognition.

- Robotics. Robot devices are widely used in factories, hospitals and other settings. In recent years, drones have also taken flight. These systems, which rely on sophisticated mapping and complex programming, also use machine perception to navigate through tasks.

- Social intelligence. Autonomous vehicles, robots, and digital assistants such as Siri and Alexa require coordination and orchestration. As a result, these systems must have an understanding of human behavior along with a recognition of social norms.

Methods of Artificial Intelligence

There are a number of approaches used to develop and build AI systems. These include:

- Machine Learning (ML). This branch of AI uses statistical methods and algorithms to discover patterns and “train” systems to make predictions or decisions without explicit programming. It may consist of supervised and semi-supervised ML (which rely on classifications and labels) and unsupervised ML (which uses only data inputs and no human-applied labels).

- Deep Learning. This approach relies on artificial neural networks (ANNs) to approximate the neural pathways of the human brain. Deep learning systems are particularly valuable for developing computer vision, speech recognition, machine translation, social network filtering, video games and medical diagnosis.

- Bayesian Networks. These systems rely on probabilistic graphical models that use random variables and conditional independence to better understand and act on the relationships between things, such as a drug and side effects or darkness and a light switch turning on.

- Genetic Algorithms. These search algorithms tap a heuristic approach modeled after natural selection. They use mutation models and crossover techniques to solve complex optimization problems, including biological challenges.
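The genetic algorithm idea in the last item can be sketched in a few lines of Python. This is a minimal illustration, not a production method: the toy goal (evolving a string of bits toward all ones), the function names `fitness`, `crossover`, `mutate` and `evolve`, and every parameter value are assumptions made for this example.

```python
import random


def fitness(bits):
    """Score a candidate: the number of 1s (toy goal: all ones)."""
    return sum(bits)


def crossover(a, b, rng):
    """Single-point crossover: head of parent a, tail of parent b."""
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]


def mutate(bits, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [1 - bit if rng.random() < rate else bit for bit in bits]


def evolve(length=20, pop_size=30, generations=60, rate=0.02, seed=0):
    """Run the loop: select the fitter half, then breed and mutate children."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    population = [
        [rng.randint(0, 1) for _ in range(length)] for _ in range(pop_size)
    ]
    for _ in range(generations):
        # Selection: the fitter half survives unchanged and acts as parents.
        population.sort(key=fitness, reverse=True)
        parents = population[: pop_size // 2]
        children = []
        while len(parents) + len(children) < pop_size:
            mother, father = rng.sample(parents, 2)
            children.append(mutate(crossover(mother, father, rng), rate, rng))
        population = parents + children
    return max(population, key=fitness)


if __name__ == "__main__":
    best = evolve()
    print(fitness(best), best)
```

Because the fitter half of each generation is carried over unchanged, the best score can never fall from one generation to the next. Real applications swap in a domain-specific fitness function and often use other selection schemes.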

Uses of Artificial Intelligence

There is no shortage of compelling use cases for AI. Here are some leading examples:

Healthcare

Artificial intelligence can play a leading role in healthcare. It enables health professionals to understand risk factors and diseases at a deeper level. It can aid in diagnosis and provide insight into risks. AI also powers smart devices, surgical robots and Internet of Things (IoT) systems that support patient tracking or alerts.

Agriculture

AI is now widely used for crop monitoring. It helps farmers apply water, fertilizer and other substances at optimal levels. It also aids in preventative maintenance for farm equipment and it is spawning autonomous robots that pick crops.

Finance

Few industries have been transformed by AI more than finance. Today, algorithms designed by quantitative analysts (quants) trade stocks with no human intervention, banks make automated credit decisions instantly, and financial organizations use algorithms to spot fraud. AI also allows consumers to scan paper checks and make deposits using a smartphone.

Retail

A growing number of consumer-facing apps and tools support image recognition, voice and natural language processing and augmented reality (AR) features that allow consumers to preview a piece of furniture in a room or office or see what makeup looks like without heading to a physical store. Retailers are also using AI for personalized marketing, managing supply chains, and cybersecurity.

Travel, Transportation and Hospitality

Airlines, hotels, and rental car companies use AI to forecast demand and adapt pricing dynamically. Airlines also rely on AI to optimize the use of aircraft for routes, factoring in weather conditions, passenger loads and other variables. AI can also help them predict when aircraft require maintenance. Hotels are using AI, including image recognition, for deploying robots and security monitoring. Autonomous vehicles and smart transportation grids also rely on AI.

Benefits & Risks of Artificial Intelligence

For businesses, it’s not a question of whether to use AI, since many organizations already tap into it on a daily basis. It’s a question of how to maximize the benefits and minimize the risks.

As a starting point, it’s essential to know how and where AI can improve business processes and build a workforce that understands what artificial intelligence is, where it fits in and what opportunities it offers. This may require workers to have new knowledge and skills – and AI salaries are competitive – along with a rethinking of service providers, workflows and internal processes.

Artificial intelligence serves up other challenges. One of the biggest stumbling points for AI, including machine learning and deep learning, is poorly constructed frameworks. When users train models with bad data or construct flawed statistical models, incorrect and even dangerous outcomes often follow.

AI tools, while increasingly easy to use, require data science expertise. Other important factors include ensuring there’s enough computing power and the right cloud-based infrastructure in place, and mitigating fears about job loss.

In any case, artificial intelligence is introducing bold opportunities to create smarter and more powerful machines. In the years ahead, AI will certainly further transform business and life.